TIL: trying openAI's codex

i follow AI news, and most of the news i consume comes from optimistic AI forums, so i believe we should have agi in the next 5 years. i know only basic python, but i have used llms to help me write basic python scripts. openAI prompted me to try codex, so how could i say no?

setting up was fairly easy: i already had vs code installed, so i downloaded the codex extension, logged in, then logged into github, and it was on.

i gave it a fairly easy script to write: parse two blog sites, randomly scrape the text of 2 blog posts, and output it for me to read. and the agent was on. it did ask me for permission to run code from the terminal, but there is an option to give it general permission so it stops asking and just keeps going on its own. it's not perfect. it made mistakes, like assuming i had some python library installed, but it found those mistakes on its own and then corrected them.

i ran the first version, and it was only giving me the posts visible on the homepage and ignoring those in the archive (the website is S Anand Sir’s Blog). i told it that, and it fixed it.

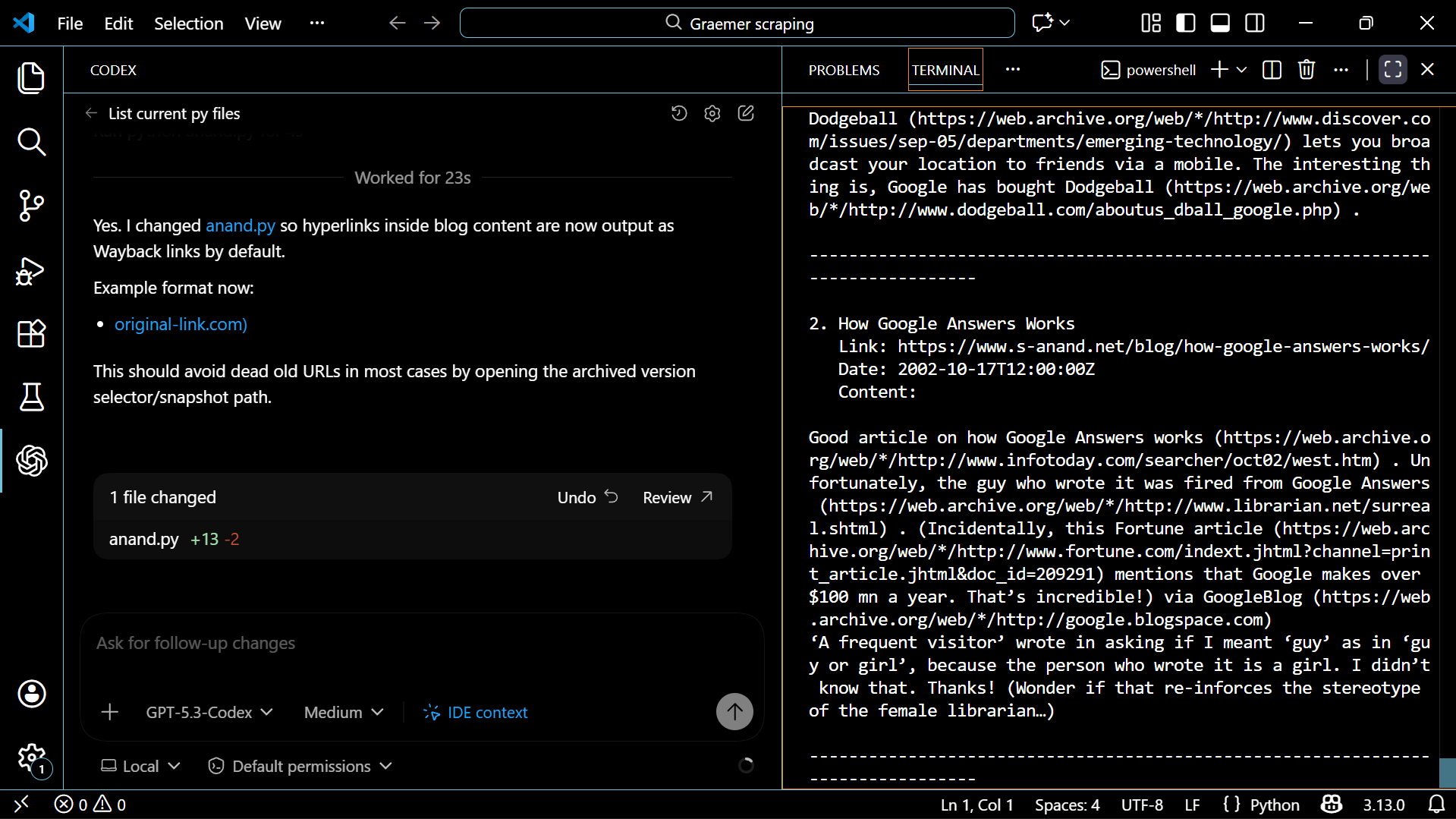

then i wanted the dates as well, so it added those. then i wanted the hyperlinks mentioned in the blog posts, so it did that too. but the posts go back to 1999, and most of the hyperlinks returned 404 errors. so i asked it to give me the wayback machine link instead, and it did that as well.
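i don't know exactly what codex wrote for this step, but the wayback trick itself is simple enough to sketch. this assumes the script already has each hyperlink and the date of the post it came from; the function name `wayback_url` and the example link below are my own placeholders, not codex's code.

```python
from datetime import date


def wayback_url(link: str, post_date: date) -> str:
    """Return a Wayback Machine URL for `link`, pinned near `post_date`.

    The Wayback Machine accepts URLs of the form
    https://web.archive.org/web/<timestamp>/<original-url> and redirects to
    the capture closest to <timestamp>, so a dead link from an old post can
    still point at a copy from roughly when the post was written.
    """
    # YYYYMMDD, e.g. 19990315; the Wayback Machine accepts partial timestamps
    timestamp = post_date.strftime("%Y%m%d")
    return f"https://web.archive.org/web/{timestamp}/{link}"


if __name__ == "__main__":
    # hypothetical link and date, just for illustration
    print(wayback_url("http://example.com/old-page.html", date(1999, 3, 15)))
    # prints https://web.archive.org/web/19990315/http://example.com/old-page.html
```

using the post's date instead of today's date makes it more likely you land on the page as it looked when it was linked, not some later version of it.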

now, yes, my requests were probably trivial in the world of software engineering, but it's amazing. magical.

any sufficiently advanced technology is indistinguishable from magic - Arthur C. Clarke

coding agents and llms can help us get a lot of stuff done. and fast.

but what i realise is that while i’m getting these things done fast, i don’t actually know how to do any of them myself. if i didn’t have access to llms, i wouldn’t be able to do anything. over-reliance on llms isn’t great. it makes life easy, but actual learning requires doing hard things. that’s just neuroscience. what ai does to the skills of the white-collar economy in the long term is an interesting thought experiment. obviously, if we get agi, then we don’t need no skills.

difficult for the evolutionarily naive brain to live in these times.